Commander #1: We’ve analyzed their attack, sir, and there is a danger. Should I have your ship standing by?

Gov. Tarkin: Evacuate? In our moment of triumph? I think you overestimate their chances.

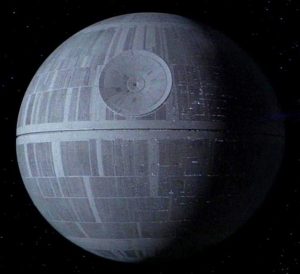

– Quote from the film Star Wars IV (1)

The Star Wars film epic, in addition to providing good entertainment, raises some technology issues relevant to our own time and galaxy. The latest film in the series, “Rogue One”, focuses on how the rebels stole the engineering plans for the Death Star, which was the plot focus of the very first film, released in 1977 (Episode IV). What is interesting to note are the security-of-design issues raised in these films. The key vulnerability used to destroy the Death Star came from analysis of the data stolen in the rebel operation. An overlooked flaw discovered in the design was used to destroy the most destructive weapon in history. This provides encouragement to those arguing for the importance of including security methodologies in the system design process from Day 1. A malicious actor who discovers an unintended or unknown design flaw in a system can exploit it. The lesson is best learned early: do not take security for granted when designing a new product and bringing it to market.

There is, however, another lesson to be learned. In the latest installment of the Star Wars saga, we learn that the designer himself intentionally placed an exploitable flaw in the system. The designer, who did not agree with the motives of the evil emperor, intended for an attacker to find and exploit it.

Setting the film aside, one may ask whether there is something relevant here to the real world. Who would intentionally place a flaw in a design that could, if exploited, cause damage or even hurt people? I would suggest that the possibility does exist, for we have had some brief glimpses that this practice has been and still is applied. One glimpse goes back to the days of the Cold War. A former official of the Reagan administration has written in his memoirs about something similar. Apparently, the secret services were able to intercept control equipment on its way to the Soviet Union and add malicious code to it, later causing a massive pipeline explosion in Siberia in the early 1980s (2). One may say that was long ago and that the trail leading to that event has faded with the end of the Soviet Union. Is something that happened a few years ago worth considering, then? If we can assume that the revelations supplied by Mr. Snowden and news organizations like Der Spiegel are legitimate, then we should think again. Reports have been published about intelligence services intercepting products and altering them before they reach their intended customers (3). The idea of placing phone taps is quite an old one, and the possibility of tampering with today’s high-tech equipment to make it easier to bypass security barriers and conduct espionage, or do something even more damaging, must sound like a cool thing to do.

It should not be surprising that industry also has an interest in this kind of activity. Take, for example, the case of the automobile manufacturer Volkswagen (4). Here the pressure to keep market share and increase profits seems to have been among the motivations behind the intentional placement of specially modified stealth software in the control systems of its diesel cars. The case is interesting because it is reminiscent of Stuxnet (malware that compromised the control systems in a uranium enrichment facility), in the sense that control software was manipulated so that the equipment used to inspect the automobile received a misleading picture of its exhaust emissions. We have here a further example of the erosion of trust. Can we trust that industry understands what security means? The lesson of Stuxnet has not been lost on the hacking and research communities. CrySyS Lab in Hungary has been exploring the possibilities of applying the Stuxnet methodology to other control system devices (5).

Tampering with IT devices that may end up as part of a critical system is a dangerous activity, especially if done by unfriendly actors with the resources and approval of a government. It is just as dangerous for friendly actors to engage in this activity. The complexity of systems today makes it very difficult to test and foresee what the consequences of such an operation would be. One wonders whether the Volkswagen engineers did any extra testing of the effect of their intentional software bug on other parts of the automobile’s control systems and devices. The Siberian pipeline explosion attributed to the above-mentioned Cold War intelligence operation was supposedly visible from a satellite in space. One can only wonder what the effects of such an operation would look like if it occurred in today’s highly complex and integrated systems. The design lessons coming from the film “Rogue One” are worth considering.

References

1. http://www.imdb.com/title/tt0076759/quotes

2. War in the fifth domain, http://www.economist.com/node/16478792 Accessed 2017-01-09

3. NSA reportedly planted spyware on electronics equipment, http://www.cnet.com/news/nsa-reportedly-planted-spyware-on-electronics-equipment/ Accessed 2017-01-09

4. Guilbert Gates, Jack Ewing, Karl Russell and Derek Watkins. VW emission scandal explained. NYT http://www.nytimes.com/interactive/2015/business/international/vw-diesel-emissions-scandal-explained.html?em_pos=medium&emc=edit_el_20160428&nl=at-times&nl_art=6&nlid=4969678&ref=headline&te=1&_r=2 Updated January 11, 2017

5. Buttyan, Hacking cars in the style of STUXNET. http://blog.crysys.hu/2015/10/hacking-cars-in-the-style-of-stuxnet/ October 28, 2015, accessed on 2017-01-12